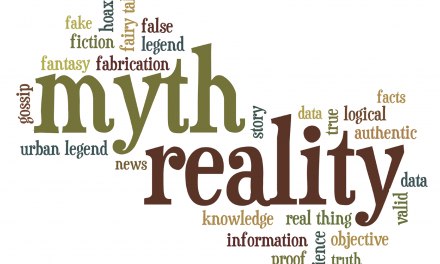

I’ve been stumbling across references to meta-analysis in media explorations of the opioid epidemic, and it’s often presented as the “final word” on a particular question. As far as I can determine, it doesn’t deserve that status, for a number of reasons. It should be considered a helpful tool in research, but one that’s still vulnerable to weaknesses inherent in the research process. Here’s why:

Meta-analysis (defined by one source as a statistical approach that combines results from multiple studies to improve estimates of the size of an effect or to resolve uncertainty when studies disagree) flat out sounds so darn impressive that I’m not surprised most casual readers accept it as law, at least as far as science is concerned.

But like any statistical process, meta-analysis isn’t immune to error. If someone held a gun to my head and forced me to sum it up, I’d probably refer to an old rule from computing: “Garbage in, garbage out.” If something is wrong with the studies in the sample, with the methods used, with the analysis itself, or with interpretation of the results, that can and often does adversely affect the conclusions.

Suppose one really large study is included along with several smaller ones. Because pooled estimates typically give more weight to larger, more precise studies, that one study will likely pull the overall result toward its own finding. If Ginormous Study #1 happens to be flawed methodologically, those errors will carry right over into the meta-analysis. And if the sample includes a few studies that arguably didn’t meet the criteria for inclusion (because they weren’t similar enough, let’s say), that too can affect the results.
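To put rough numbers on that, here is a minimal sketch of how one big study can dominate a pooled estimate. It assumes a simple fixed-effect, inverse-variance weighting scheme, which is one common approach but not the only one, and every effect size and standard error below is invented purely for illustration.

```python
def pooled_estimate(studies):
    """Fixed-effect, inverse-variance pooling.

    `studies` is a list of (effect, standard_error) pairs.
    Returns the pooled effect and each study's share of the total weight.
    """
    # A study's weight is 1 / variance, so precise (usually large) studies count more.
    weights = [1.0 / se ** 2 for _, se in studies]
    total = sum(weights)
    pooled = sum(w * effect for w, (effect, _) in zip(weights, studies)) / total
    shares = [w / total for w in weights]
    return pooled, shares


# Hypothetical data: four small studies that find little or no effect...
small_studies = [(0.10, 0.20), (0.05, 0.25), (0.00, 0.20), (0.12, 0.25)]
# ...and one very large (hypothetical) study reporting a big effect.
ginormous_study = (0.50, 0.05)

pooled_without, _ = pooled_estimate(small_studies)
pooled_with, shares = pooled_estimate(small_studies + [ginormous_study])

print(f"Pooled effect, small studies only: {pooled_without:.2f}")
print(f"Pooled effect, with the big study: {pooled_with:.2f}")
print(f"Big study's share of total weight: {shares[-1]:.0%}")
```

With these made-up inputs, the big study carries most of the weight, so the pooled result lands close to whatever it found. If that study is flawed, the flaw is now baked into the headline number.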

Various factors can bias a meta-analysis. Maybe the conclusions are overstated and not strongly supported by the actual data. Or the authors have overestimated the reliability of their methods. Or during interpretation, somebody’s confirmation bias snuck in unobserved and unduly influenced the process. Basically, these are the same problems that plague science in general, and meta-analyses are not immune.

Some forms of meta-analysis actually leave themselves wide open by relying on inference to compare various findings (say, the effectiveness of different forms of therapy). It’s much easier to document the effectiveness of a treatment versus no treatment than it is to show that treatment X is clearly more effective than treatment Y.

Not that I reject the notion of meta-analysis as a tool in science (that would be silly), but I certainly don’t take it as gospel. To me, science, at least good science, simply doesn’t allow for that kind of certainty.