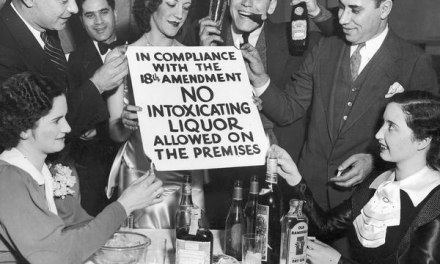

We’ve been reading quite a bit in the media about links between various health problems and everyday practices, like the one that connected bacon and hot dog consumption to cancer. The popular coverage was a bit over the top, as you can see here, contrasted with this more measured analysis.

I’ll leave your diet choices up to you, but research like this does help illustrate how difficult it is to accurately determine the cause of something (like, say… addiction) based on research studies. That’s because of the difference between correlation and causation. In health research, correlation is a statistical relationship between two phenomena, whereas causation means that one actually produces the other.

In some research, statistical methods are used to identify trends within an existing population, often a large number of persons who responded to an online survey or phone interview. This type of research is great for identifying areas for possible future investigation. It’s not very helpful when it comes to establishing a clear causal relationship between a variable and an outcome.

Population studies take advantage of data that’s already been collected to investigate topics of interest. Example: The question of whether use of antidepressants appears to correlate with a reduction in depression symptoms.

But because the data were gathered without any experimental controls, a sample like this makes it impossible for us to rule out all the other factors that could influence our conclusions. For that, most scientists look to controlled experiments.
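For the curious, here’s a minimal sketch of how that can happen. It’s a toy simulation, not real data: the "hidden" factor, the sample size, and the function names are all assumptions made up for illustration. A factor nobody measured nudges both the exposure and the outcome, and the two end up correlated even though neither one affects the other.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate_survey(n=10_000, seed=0):
    """Hypothetical survey: a hidden factor drives both measurements."""
    rng = random.Random(seed)
    exposure, outcome = [], []
    for _ in range(n):
        hidden = rng.gauss(0, 1)  # unmeasured factor (an assumption)
        # Exposure and outcome each depend on the hidden factor plus noise.
        # The outcome never depends on the exposure itself.
        exposure.append(hidden + rng.gauss(0, 1))
        outcome.append(hidden + rng.gauss(0, 1))
    return exposure, outcome


exposure, outcome = simulate_survey()
r = pearson(exposure, outcome)
print(f"correlation between exposure and outcome: {r:.2f}")
```

With these made-up settings the correlation should land somewhere around 0.5, which would look like a meaningful link in a survey, yet by construction the exposure has no effect on the outcome at all.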

In the best sort of research, scientists carefully select their sample, assign subjects at random to a study group or a control group, and take steps to eliminate the influence of bias. In a medication study, for example, that can include blinding, or leaving the subject in the dark as to whether she’s receiving the med or a placebo. Or even double blinding, where the researcher doesn’t know, either.
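Here’s a rough sketch of why random assignment matters so much. Again, it’s a toy simulation with invented numbers and hypothetical names, not anyone’s actual study. The "treatment" does nothing at all. When subjects with a higher hidden factor sort themselves into the treated group, the groups still look different; when a coin flip decides, the difference mostly disappears.

```python
import random


def mean(values):
    return sum(values) / len(values)


def group_difference(randomize, n=10_000, seed=1):
    """Mean outcome of treated minus control for a treatment with NO true effect."""
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        hidden = rng.gauss(0, 1)  # unmeasured factor (an assumption)
        if randomize:
            # Controlled experiment: a coin flip decides the group.
            gets_treatment = rng.random() < 0.5
        else:
            # Self-selection: people high on the hidden factor opt in.
            gets_treatment = hidden > 0
        # The outcome depends only on the hidden factor plus noise.
        outcome = hidden + rng.gauss(0, 1)
        (treated if gets_treatment else control).append(outcome)
    return mean(treated) - mean(control)


self_selected = group_difference(randomize=False)
randomized = group_difference(randomize=True)
print(f"self-selected groups differ by {self_selected:.2f}")
print(f"randomized groups differ by   {randomized:.2f}")
```

In the self-selected version the treated group comes out well ahead despite the treatment being useless, while in the randomized version the gap should hover near zero. Blinding isn’t modeled here; this only illustrates the random assignment step.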

It’s possible to identify a link between an outcome and a possible risk factor that is not confirmed by subsequent controlled experiments. But one or both studies may have methodological problems, so scientists generally wait for more research, until the preponderance of the evidence points clearly one way. That’s what the WHO did before issuing its recommendation for reduced red meat consumption.

Problem is, we in the general public don’t like being made to wait. We want answers, and we want them to be final. We don’t want to read something authoritative-sounding in the newspaper, only to have it contradicted a few years later. Yet even with the precautions taken, that still happens.

It’s certainly not going to hurt us to eat less red meat. But the argument about the quality of the research is just beginning, and will probably continue for decades.

Update, 05/09/2016: This video from John Oliver pretty much covers it all and is way more amusing.